Streamlining Schema Management: Confluent Relocates Schema IDs to Kafka Headers

Confluent has introduced an enhancement for Apache Kafka that moves schema identifiers out of message payloads and into record headers. The change decouples metadata from data, which makes schema governance more straightforward and schema evolution more flexible. Because it works through Schema Registry, it behaves consistently across serialization formats and loosens the coupling between data and its descriptive metadata in event-driven systems.

What exactly did Confluent change in Apache Kafka regarding schema IDs?

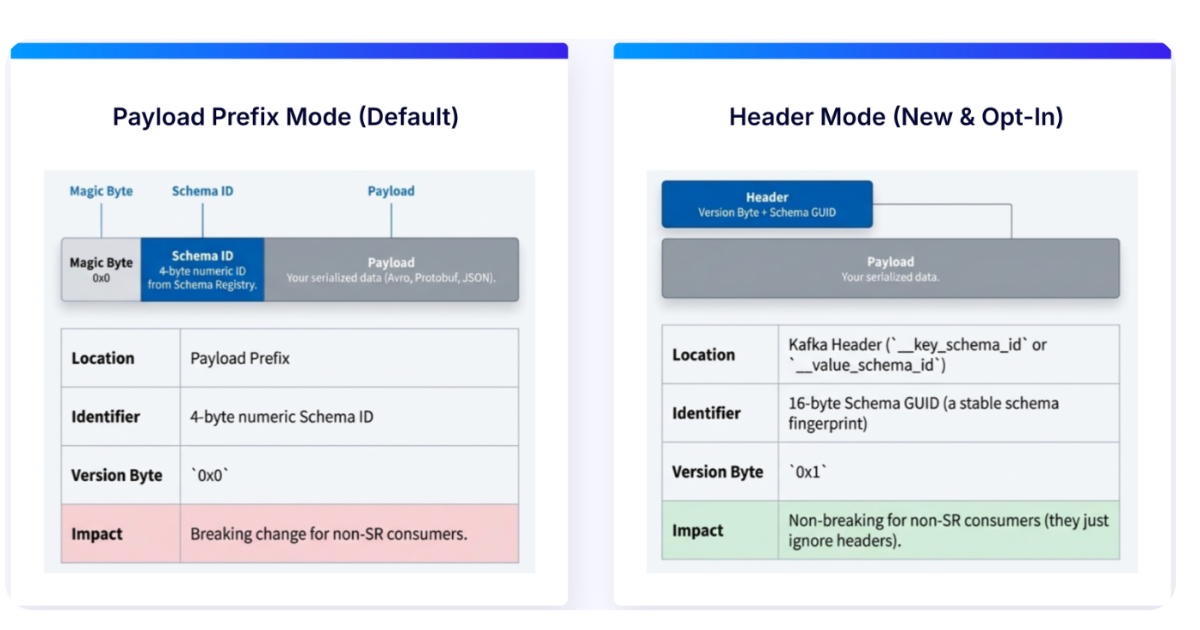

Confluent moved the schema ID, which was traditionally embedded at the start of every serialized message, into the Kafka record headers. In the classic Confluent wire format, each payload begins with a magic byte (0) followed by a 4-byte big-endian schema ID, and only then the serialized data. Every consumer therefore had to understand that layout and strip the prefix before it could decode the data. Now the schema ID travels in a record header, the key/value metadata that Kafka attaches to each message (a native Kafka feature since version 0.11). The payload remains pure data, and the schema identifier can be read without parsing the message body.
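To make the difference concrete, here is a minimal Python sketch contrasting the two layouts. The prefixed layout follows Confluent's classic wire format (magic byte 0, a 4-byte big-endian schema ID, then the data). The header layout is an assumption for illustration only: the header name `value_schema_id` and its 4-byte big-endian encoding are hypothetical, not Confluent's actual header format. Headers are modeled as a list of `(key, bytes)` tuples, which is how Kafka clients commonly expose them.

```python
import struct
from typing import Optional

MAGIC_BYTE = 0
# Hypothetical header name and encoding, for illustration only.
SCHEMA_ID_HEADER = "value_schema_id"


def encode_prefixed(schema_id: int, data: bytes) -> bytes:
    """Classic layout: magic byte + 4-byte big-endian schema ID + serialized data."""
    return struct.pack(">BI", MAGIC_BYTE, schema_id) + data


def decode_prefixed(payload: bytes) -> tuple[int, bytes]:
    """Strip the 5-byte prefix; the reader must know this layout to reach the data."""
    if len(payload) < 5 or payload[0] != MAGIC_BYTE:
        raise ValueError("payload does not start with the expected magic byte")
    (schema_id,) = struct.unpack(">I", payload[1:5])
    return schema_id, payload[5:]


def encode_with_header(schema_id: int, data: bytes) -> tuple[list[tuple[str, bytes]], bytes]:
    """Header layout: the payload stays pure data; the ID travels as a header."""
    headers = [(SCHEMA_ID_HEADER, struct.pack(">I", schema_id))]
    return headers, data


def schema_id_from_headers(headers: list[tuple[str, bytes]]) -> Optional[int]:
    """Read the schema ID from headers without touching the payload."""
    for key, value in headers:
        if key == SCHEMA_ID_HEADER:
            return struct.unpack(">I", value)[0]
    return None


# Usage
headers, payload = encode_with_header(42, b'{"id": 1}')
print(schema_id_from_headers(headers))  # 42
print(payload)  # b'{"id": 1}'
print(decode_prefixed(encode_prefixed(42, b'{"id": 1}')))  # (42, b'{"id": 1}')
```

The header variant never has to look inside `payload`, while the prefixed variant must slice the first five bytes off before any decoder can run.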

Why does moving schema IDs to headers simplify schema governance?

Schema governance involves managing schema versions and compatibility rules and ensuring that producers and consumers agree on data formats. With schema IDs in headers, governance tooling can read the header to determine which registered schema a record uses, without touching the payload. Because the identifier is always available as plain metadata, compatibility policies are simpler to enforce, schema usage is easier to audit, and version migrations can be tracked without altering the data format. The reduced coupling lets downstream systems handle schema changes more independently and with less risk of breaking existing consumers.
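As a sketch of the auditing idea, the hypothetical helper below tallies schema usage and flags records whose header carries no ID or an ID outside an approved set, reading only headers. The header name, its 4-byte encoding, and the static `approved_ids` set are illustrative assumptions; a real deployment would check IDs against Schema Registry rather than a hard-coded set.

```python
import struct
from collections import Counter
from typing import Iterable

SCHEMA_ID_HEADER = "value_schema_id"  # hypothetical header name

# A record modeled as (headers, payload).
Record = tuple[list[tuple[str, bytes]], bytes]


def audit_schema_usage(
    records: Iterable[Record], approved_ids: set[int]
) -> tuple[Counter, list[int]]:
    """Count records per schema ID and list the indexes of policy violations.

    Only headers are inspected; payload bytes are never parsed.
    """
    usage: Counter = Counter()
    violations: list[int] = []
    for index, (headers, _payload) in enumerate(records):
        # dict() keeps the last value if a header key is repeated.
        raw = dict(headers).get(SCHEMA_ID_HEADER)
        if raw is None:
            violations.append(index)  # record carries no schema ID at all
            continue
        schema_id = struct.unpack(">I", raw)[0]
        usage[schema_id] += 1
        if schema_id not in approved_ids:
            violations.append(index)
    return usage, violations


# Usage
records = [
    ([(SCHEMA_ID_HEADER, struct.pack(">I", 1))], b"opaque"),
    ([(SCHEMA_ID_HEADER, struct.pack(">I", 2))], b"opaque"),
    ([], b"opaque"),
]
usage, bad = audit_schema_usage(records, approved_ids={1})
print(dict(usage))  # {1: 1, 2: 1}
print(bad)  # [1, 2]
```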

How does this update affect compatibility across different serialization formats?

Moving schema IDs to headers also improves consistency across serialization formats. Avro, Protobuf, and JSON Schema serializers have each carried the schema ID inside the serialized bytes, and the exact layout can differ by format (Confluent's Protobuf payloads, for example, also carry message indexes after the ID). In a header, the schema ID becomes format-agnostic: a consumer reads the header to learn which schema applies, whether the payload is Avro, Protobuf, or something else. This removes format-specific logic for extracting the ID and makes hybrid environments, where different topics use different serialization formats, easier to govern with the same Schema Registry.
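The following minimal sketch illustrates the format-agnostic lookup: a single header read yields the schema ID, and only then does the consumer pick a format-specific decoder. Everything here is hypothetical: `REGISTRY_FORMATS` stands in for Schema Registry, the header name and encoding are assumptions, and only JSON is decoded for real; the Avro and Protobuf entries are placeholders where a real library would plug in.

```python
import json
import struct
from typing import Any, Callable

SCHEMA_ID_HEADER = "value_schema_id"  # hypothetical header name

# Hypothetical in-memory stand-in for Schema Registry: schema ID -> format.
REGISTRY_FORMATS = {1: "AVRO", 2: "PROTOBUF", 3: "JSON"}

# Only JSON is decoded for real; the others return tagged raw bytes as
# placeholders for an Avro or Protobuf library.
DECODERS: dict[str, Callable[[bytes], Any]] = {
    "JSON": lambda data: json.loads(data),
    "AVRO": lambda data: ("avro-bytes", data),
    "PROTOBUF": lambda data: ("protobuf-bytes", data),
}


def deserialize(headers: list[tuple[str, bytes]], payload: bytes) -> Any:
    # The same header lookup works for every format: no format-specific
    # parsing of the payload is needed to find the schema ID.
    raw = dict(headers)[SCHEMA_ID_HEADER]
    schema_id = struct.unpack(">I", raw)[0]
    fmt = REGISTRY_FORMATS[schema_id]
    return DECODERS[fmt](payload)


# Usage
print(deserialize([(SCHEMA_ID_HEADER, struct.pack(">I", 3))], b'{"a": 1}'))  # {'a': 1}
print(deserialize([(SCHEMA_ID_HEADER, struct.pack(">I", 1))], b"\x02"))  # ('avro-bytes', b'\x02')
```

The design point is that format knowledge is needed only after the schema is known, not to discover which schema applies.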

What does “reducing coupling between data and metadata” mean in this context?

In event-driven architectures, data and its descriptive metadata (such as schema version, topic name, or encoding type) were often combined in the message bytes. This created tight coupling: reading or changing the metadata meant working with the serialized message itself. Moving schema IDs into Kafka headers physically separates this metadata from the payload, so schema versions can evolve independently of the data they describe. For example, a schema's compatibility rules can be updated without modifying existing payloads. Tools can also inspect or filter messages by metadata without deserializing the data, which reduces processing overhead and simplifies stream processing.
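Filtering on metadata alone can be sketched as a generator that passes payloads through as opaque bytes. This is a minimal illustration under the same assumptions as before: the header name and its 4-byte big-endian encoding are hypothetical, and records are modeled as `(headers, payload)` tuples.

```python
import struct
from typing import Iterable, Iterator

SCHEMA_ID_HEADER = "value_schema_id"  # hypothetical header name
Record = tuple[list[tuple[str, bytes]], bytes]  # (headers, payload)


def filter_by_schema(records: Iterable[Record], wanted_ids: set[int]) -> Iterator[Record]:
    """Yield records whose header schema ID is in wanted_ids.

    Payloads are never decoded, so the filter works even for formats it
    knows nothing about. Records without the header are dropped.
    """
    for headers, payload in records:
        raw = dict(headers).get(SCHEMA_ID_HEADER)
        if raw is not None and struct.unpack(">I", raw)[0] in wanted_ids:
            yield headers, payload


# Usage: the payloads are arbitrary bytes that no decoder could parse.
stream = [
    ([(SCHEMA_ID_HEADER, struct.pack(">I", 10))], b"\xff\xfe"),
    ([(SCHEMA_ID_HEADER, struct.pack(">I", 11))], b"\x00\x01"),
    ([], b"\x99"),
]
kept = list(filter_by_schema(stream, wanted_ids={11}))
print([payload for _, payload in kept])  # [b'\x00\x01']
```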


How does the update integrate with Schema Registry?

The change is tightly integrated with Confluent's Schema Registry, which already stores all schema versions and their compatibility rules. With schema IDs in headers, serializers no longer embed the ID inside the payload: the producer-side serializer writes it to a header, and the consumer-side deserializer reads that header value and uses it to fetch the corresponding schema from the registry. The registry continues to handle validation, storage, and evolution checks. Producers and consumers that use the Confluent serializers write and read schema IDs from headers automatically once configured to do so, which keeps the integration seamless for application code and simplifies the overall schema management flow.
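The consumer-side flow can be sketched as a small resolver that prefers the header, falls back to the classic 5-byte prefix for records written before the switch, and caches schemas fetched from the registry. This is a hypothetical illustration, not Confluent's deserializer: the class name, header name, and header encoding are assumptions. The request path `/schemas/ids/{id}` is Schema Registry's REST endpoint for looking up a schema by ID; the `fetch` callable is injected so the sketch runs without a network.

```python
import struct
from typing import Callable

SCHEMA_ID_HEADER = "value_schema_id"  # hypothetical header name
MAGIC_BYTE = 0


class SchemaResolver:
    """Hypothetical helper: locate the schema ID, then fetch and cache the schema."""

    def __init__(self, fetch: Callable[[str], str]) -> None:
        self._fetch = fetch  # takes a registry request path, returns schema text
        self._cache: dict[int, str] = {}

    def schema_id(self, headers: list[tuple[str, bytes]], payload: bytes) -> tuple[int, bytes]:
        # Prefer the header; fall back to the classic magic-byte prefix so
        # older records remain readable during a migration.
        raw = dict(headers).get(SCHEMA_ID_HEADER)
        if raw is not None:
            return struct.unpack(">I", raw)[0], payload
        if len(payload) >= 5 and payload[0] == MAGIC_BYTE:
            return struct.unpack(">I", payload[1:5])[0], payload[5:]
        raise ValueError("no schema ID in headers or payload prefix")

    def schema_for(self, headers: list[tuple[str, bytes]], payload: bytes) -> tuple[str, bytes]:
        """Return (schema text, data bytes), hitting the registry once per ID."""
        schema_id, data = self.schema_id(headers, payload)
        if schema_id not in self._cache:
            self._cache[schema_id] = self._fetch(f"/schemas/ids/{schema_id}")
        return self._cache[schema_id], data


# Usage with a fake registry that records each request.
calls = []


def fake_fetch(path: str) -> str:
    calls.append(path)
    return '{"type": "string"}'


resolver = SchemaResolver(fake_fetch)
print(resolver.schema_for([(SCHEMA_ID_HEADER, struct.pack(">I", 42))], b"data"))
print(resolver.schema_for([], b"\x00\x00\x00\x00\x2a" + b"data"))
print(calls)  # ['/schemas/ids/42']
```

Both records resolve to schema 42, so the second lookup is served from the cache; in both cases the returned data is `b"data"`.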

What are the key benefits for event-driven architectures from this change?

Event-driven architectures rely on loose coupling between services. Moving schema IDs to headers further decouples schema evolution from the data itself, so services can evolve their schemas independently as long as compatibility is maintained. It can also reduce overhead, because consumers and intermediaries can check the schema ID without decoding the payload. Observability improves as well: monitoring and logging tools can report on schema usage by examining headers alone. Overall, the change reduces the complexity of managing schema lifecycles and makes it easier to roll out schema changes safely in production while preserving the flexibility that event-driven systems require.
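As one example of header-only observability, the hypothetical monitor below reports each point at which a topic's schema ID changes, which is useful for watching a schema rollout. It needs only `(topic, headers)` pairs, never payloads. The header name and its 4-byte encoding are assumptions carried over from the earlier sketches.

```python
import struct
from typing import Iterable, Optional

SCHEMA_ID_HEADER = "value_schema_id"  # hypothetical header name


def schema_transitions(
    records: Iterable[tuple[str, list[tuple[str, bytes]]]],
) -> list[tuple[str, Optional[int], int]]:
    """Return (topic, previous_id, new_id) for every schema change per topic.

    previous_id is None for the first tagged record seen on a topic.
    Records without the header are skipped.
    """
    last_seen: dict[str, int] = {}
    changes: list[tuple[str, Optional[int], int]] = []
    for topic, headers in records:
        raw = dict(headers).get(SCHEMA_ID_HEADER)
        if raw is None:
            continue  # untagged record; a real monitor might count these separately
        schema_id = struct.unpack(">I", raw)[0]
        previous = last_seen.get(topic)
        if previous != schema_id:
            changes.append((topic, previous, schema_id))
            last_seen[topic] = schema_id
    return changes


# Usage
def tag(i: int) -> list[tuple[str, bytes]]:
    return [(SCHEMA_ID_HEADER, struct.pack(">I", i))]


stream = [("orders", tag(1)), ("orders", tag(1)), ("orders", tag(2)), ("users", tag(5))]
print(schema_transitions(stream))
# [('orders', None, 1), ('orders', 1, 2), ('users', None, 5)]
```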