Building a Smarter Advertising System with Multi-Agent Architecture: A Step-by-Step Guide

Introduction

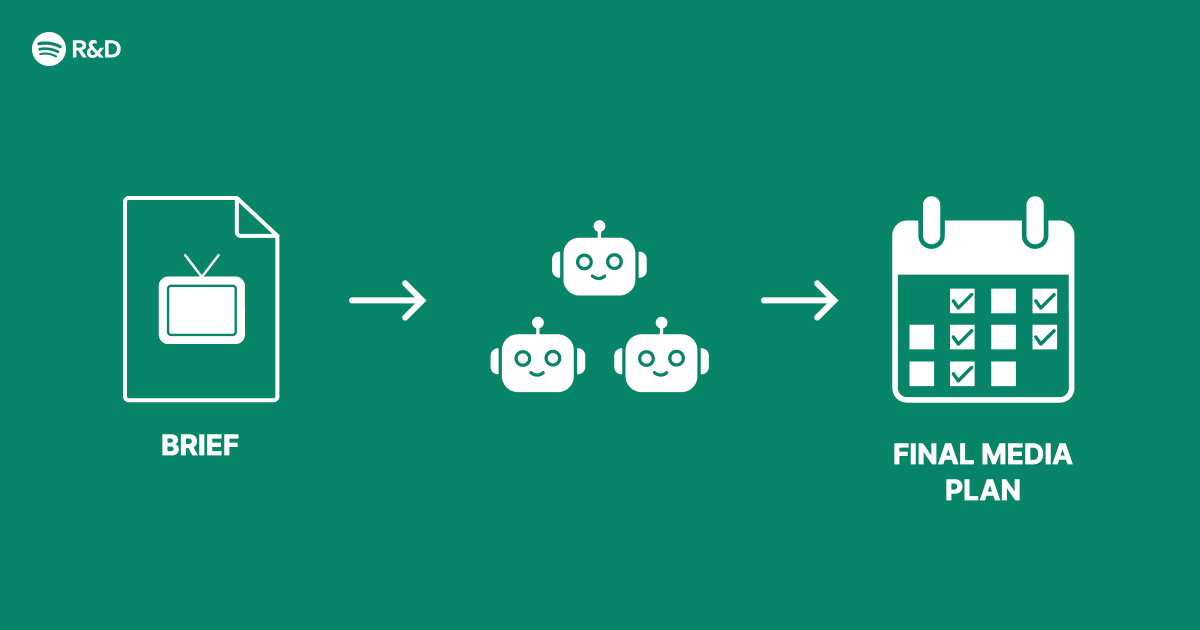

When Spotify Engineering set out to overhaul its advertising platform, the team didn't just bolt on a new AI feature; they reimagined the structural foundation. The result was a multi-agent architecture that coordinates specialized AI agents to make smarter, more adaptive ad decisions. This guide walks you through the same core principles and implementation steps, from framing the problem to deploying a system where multiple agents collaborate for better budget allocation, creative selection, and user engagement. By the end, you'll have a practical blueprint for building your own multi-agent advertising engine.

What You Need

- Technical prerequisites: Familiarity with machine learning (especially reinforcement learning), Python programming, and distributed systems concepts.

- Data infrastructure: Access to historical ad impression logs, user interaction data, and campaign performance metrics. A data pipeline (e.g., Kafka, Spark) for real-time feeds.

- Computing resources: A cluster or cloud environment (AWS, GCP) for training and serving multiple agents simultaneously.

- Frameworks & tools: A deep learning library (PyTorch or TensorFlow), a message broker (e.g., RabbitMQ) for inter-agent communication, and a monitoring stack (Prometheus, Grafana).

- Team: Data engineers, ML engineers, and domain experts in programmatic advertising (DSPs, SSPs, RTB protocols).

Step-by-Step Implementation Guide

Step 1: Define the Advertising Problem as a Multi-Agent System

Start by breaking down the monolithic ad decision process into distinct sub-problems. Typical tasks include budget allocation across campaigns, ad creative selection for each impression, bid price optimization in real-time auctions, and frequency capping. Map each sub-problem to an autonomous agent that can learn and act independently. For example: a Budget Agent manages daily spend per campaign; a Creative Agent picks the best ad variant; a Bid Agent determines the offer price; and a Frequency Agent limits how many times a user sees the same ad. These agents share a common environment (the ad market) but have different reward functions aligned with overall business goals (e.g., maximize ROI, minimize cost per conversion).
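
As a minimal sketch of this decomposition, the snippet below gives two of the agents a common `act` interface over a shared context object. The names `AdContext`, `Agent`, `max_bid_fraction`, and `cap`, as well as the field layout, are assumptions made for illustration and are not taken from Spotify's system.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class AdContext:
    """Shared view of the ad market for one impression (illustrative fields)."""
    campaign_id: str
    user_id: str
    remaining_budget: float
    impressions_shown: int  # times this user has already seen the campaign


class Agent(Protocol):
    """Every agent exposes a name and a single decision method."""
    name: str

    def act(self, ctx: AdContext) -> dict: ...


class BudgetAgent:
    name = "budget"

    def __init__(self, max_bid_fraction: float = 0.01):
        # Hypothetical rule: never risk more than a fixed share of the budget per bid.
        self.max_bid_fraction = max_bid_fraction

    def act(self, ctx: AdContext) -> dict:
        return {"max_bid": ctx.remaining_budget * self.max_bid_fraction}


class FrequencyAgent:
    name = "frequency"

    def __init__(self, cap: int = 3):
        self.cap = cap

    def act(self, ctx: AdContext) -> dict:
        # Allow the impression only while the user is below the cap.
        return {"allowed": ctx.impressions_shown < self.cap}


ctx = AdContext("campaign_a", "user_1", remaining_budget=200.0, impressions_shown=3)
decisions = {agent.name: agent.act(ctx) for agent in (BudgetAgent(), FrequencyAgent())}
```

Keeping the interface this narrow means a rule-based agent can later be swapped for a learned one without touching its callers.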

Step 2: Design the Agent Communication Protocol

Agents must coordinate without stepping on each other's toes. Choose a centralized communication pattern (via a mediator) or a decentralized peer-to-peer approach. In Spotify's architecture, agents communicate through a shared state ledger that records decisions and outcomes. Implement a lightweight message format (e.g., JSON over a message queue) so each agent can publish its intended action and read others' actions. For instance, the Budget Agent might announce: "I've allocated $200 to Campaign A today." The Bid Agent then uses that constraint to avoid overbidding. Use a conflict resolution mechanism (e.g., a priority matrix) when agents' goals clash—such as when the Creative Agent wants a risky new ad but the Bid Agent is conservative.
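
One way to picture the message format and conflict resolution is sketched below. The `AgentMessage` fields, the `spend_limit` action name, and the ordering in `PRIORITY` are hypothetical; a real priority matrix would come from your own business rules.

```python
import json
from dataclasses import asdict, dataclass

# Hypothetical priority table: the higher number wins when agents disagree.
PRIORITY = {"budget": 3, "frequency": 2, "bid": 1, "creative": 0}


@dataclass(frozen=True)
class AgentMessage:
    agent: str
    campaign_id: str
    action: str
    value: float

    def to_json(self) -> str:
        """Serialize for publication on a message queue."""
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "AgentMessage":
        return cls(**json.loads(raw))


def resolve(messages):
    """Pick the message from the highest-priority agent; unknown agents rank last."""
    return max(messages, key=lambda m: PRIORITY.get(m.agent, -1))


# The Budget Agent announces a $200 limit; the Bid Agent asks for more.
budget_msg = AgentMessage("budget", "campaign_a", "spend_limit", 200.0)
bid_msg = AgentMessage("bid", "campaign_a", "spend_limit", 350.0)

wire = budget_msg.to_json()  # what would travel over the queue
winner = resolve([bid_msg, AgentMessage.from_json(wire)])
```

Here the Budget Agent's limit wins because it ranks higher in the table, so the Bid Agent would have to plan within $200.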

Step 3: Choose a Learning Paradigm for Each Agent

Not all agents need the same learning algorithm. Use reinforcement learning (RL) for agents that operate in dynamic, competitive environments (like bidding). Use supervised learning or bandit algorithms for agents that select from a fixed set of actions (like creative selection). For budget management, a PID (proportional-integral-derivative) controller can work initially; transition to RL later for better long-term optimization. Ensure each agent's reward function is a local approximation of the global objective. For example, the Bid Agent's reward could be a function of win rate and profit margin, while the Creative Agent's reward is click-through rate (CTR).
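
For the creative-selection case, a bandit can be as small as the Thompson sampling sketch below, which keeps a Beta posterior over each creative's CTR. Thompson sampling is one common bandit algorithm chosen here for illustration; the source does not say which one Spotify uses, and the class and creative names are made up.

```python
import random


class ThompsonCreativeSelector:
    """Beta-Bernoulli Thompson sampling over a fixed set of creatives."""

    def __init__(self, creative_ids, seed=None):
        self._rng = random.Random(seed)
        # One [alpha, beta] pair per creative, starting from a uniform Beta(1, 1) prior.
        self._stats = {cid: [1, 1] for cid in creative_ids}

    def select(self):
        """Sample a plausible CTR for each creative and pick the highest."""
        samples = {
            cid: self._rng.betavariate(alpha, beta)
            for cid, (alpha, beta) in self._stats.items()
        }
        return max(samples, key=samples.get)

    def update(self, creative_id, clicked):
        """Record one impression outcome: a click raises alpha, a miss raises beta."""
        index = 0 if clicked else 1
        self._stats[creative_id][index] += 1


selector = ThompsonCreativeSelector(["variant_a", "variant_b"], seed=0)
for _ in range(300):
    selector.update("variant_a", clicked=True)   # synthetic feedback for the demo
    selector.update("variant_b", clicked=False)
picks = [selector.select() for _ in range(50)]
```

Because each choice is drawn from the posterior, creatives with little data still get explored occasionally, while creatives with clearly poor CTR are shown less and less.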

Step 4: Build Shared Infrastructure for Training and Serving

Create a centralized training pipeline that aggregates experiences from all agents. Use a replay buffer to store state-action-reward tuples from real ad auctions. Train agents offline on historical data first, then deploy them in a shadow mode (logging decisions without affecting live traffic) to validate performance. For serving, containerize each agent as a microservice (e.g., Docker + Kubernetes) so they can scale independently. Set up a feature store (e.g., Feast) to provide real-time features like user demographics, device type, and context (time of day, location). Ensure low-latency inference (under 50ms) by using optimized models (ONNX, TensorRT) and edge deployment if needed.
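
The shared replay buffer can be sketched as a bounded queue of tagged transitions. The `Transition` fields and the per-agent filter below are assumptions; a production buffer would more likely live in a distributed store than in process memory.

```python
import random
from collections import deque
from typing import NamedTuple, Optional


class Transition(NamedTuple):
    agent: str      # which agent produced the action
    state: tuple    # feature snapshot at decision time
    action: float
    reward: float


class ReplayBuffer:
    """Fixed-capacity buffer; the oldest transitions are evicted first."""

    def __init__(self, capacity: int, seed: Optional[int] = None):
        self._buffer = deque(maxlen=capacity)
        self._rng = random.Random(seed)

    def add(self, transition: Transition) -> None:
        self._buffer.append(transition)

    def sample(self, batch_size: int, agent: Optional[str] = None):
        """Draw a random batch, optionally restricted to one agent's experience."""
        pool = [t for t in self._buffer if agent is None or t.agent == agent]
        return self._rng.sample(pool, min(batch_size, len(pool)))

    def __len__(self) -> int:
        return len(self._buffer)


buffer = ReplayBuffer(capacity=3, seed=0)
for i in range(5):
    agent = "bid" if i % 2 == 0 else "creative"
    buffer.add(Transition(agent, (i,), action=float(i), reward=0.0))
bid_batch = buffer.sample(10, agent="bid")
```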

Step 5: Implement a Global Coordinator or Meta-Agent

To prevent agents from optimizing their local rewards at the expense of the global objective, introduce a meta-agent that monitors overall system performance (e.g., total revenue, average cost per acquisition, or CPA). The meta-agent can impose soft constraints, such as adjusting reward weights for individual agents, or trigger periodic re-training when KPIs drift. In Spotify's architecture, this role was played by a rule-based orchestrator that also handled fallback logic when an agent failed. Simulate the multi-agent system in a custom environment (using libraries like PettingZoo) to test coordination strategies before production rollout.
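
A rule-based meta-agent of this kind might look like the sketch below. It shifts weight between a revenue term and a cost term depending on whether observed CPA is above target, and flags drift for re-training. The two-term weighting, the step size, and the 10% drift tolerance are illustrative assumptions, not details from the source.

```python
class MetaAgent:
    """Rule-based coordinator that nudges reward weights toward a CPA target."""

    def __init__(self, target_cpa: float, step: float = 0.1):
        self.target_cpa = target_cpa
        self.step = step
        # Weights the individual agents would apply to their reward terms.
        self.weights = {"revenue": 0.5, "cost": 0.5}

    def observe(self, total_spend: float, conversions: int) -> dict:
        """Update the weights from the latest system-level KPIs."""
        cpa = total_spend / conversions if conversions else float("inf")
        if cpa > self.target_cpa:
            # Too expensive per conversion: emphasize cost control.
            cost = min(1.0, self.weights["cost"] + self.step)
        else:
            cost = max(0.0, self.weights["cost"] - self.step)
        self.weights = {"revenue": 1.0 - cost, "cost": cost}
        return dict(self.weights)

    @staticmethod
    def needs_retraining(baseline: float, current: float, tolerance: float = 0.1) -> bool:
        """Flag drift when a KPI moves more than `tolerance` away from its baseline."""
        return abs(current - baseline) / abs(baseline) > tolerance


meta = MetaAgent(target_cpa=10.0)
weights = meta.observe(total_spend=1200.0, conversions=100)  # CPA of 12 is above target
drifted = MetaAgent.needs_retraining(baseline=100.0, current=85.0)
```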

Step 6: Integrate with Real-Time Bidding (RTB) Pipelines

Connect your agents to the ad exchange via RTB protocols (OpenRTB). The Bid Agent must respond to bid requests within milliseconds. Design a lightweight decision loop: receive bid request → retrieve user features → query the Creative Agent for best ad → query the Bid Agent for price → query the Frequency Agent to check cap → send bid response. Use caching (e.g., Redis) for frequently used features and decisions. Implement fallback defaults (e.g., a heuristic bidding baseline) in case an agent's inference times out.
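
The decision loop and its timeout fallback can be sketched with the standard library alone. The response dictionary below is a simplified stand-in rather than a conformant OpenRTB bid response, and `FALLBACK_BID`, the agent callables, and the 50 ms default are hypothetical.

```python
import concurrent.futures as cf
import time

_EXECUTOR = cf.ThreadPoolExecutor(max_workers=4)
FALLBACK_BID = 0.10  # hypothetical heuristic baseline price


def call_with_timeout(fn, arg, timeout_s, default):
    """Run one agent call; return `default` if it misses the deadline."""
    future = _EXECUTOR.submit(fn, arg)
    try:
        return future.result(timeout=timeout_s)
    except cf.TimeoutError:
        return default


def handle_bid_request(request, agents, timeout_s=0.05):
    """Features -> creative -> price -> frequency check -> response (or no bid)."""
    features = agents["features"](request["user_id"])
    creative = call_with_timeout(agents["creative"], features, timeout_s, "default_creative")
    price = call_with_timeout(agents["bid"], features, timeout_s, FALLBACK_BID)
    # If the frequency check times out, err on the side of not bidding.
    allowed = call_with_timeout(agents["frequency"], features, timeout_s, False)
    if not allowed:
        return None
    return {"id": request["id"], "creative": creative, "price": price}


def slow_bid_agent(features):
    time.sleep(0.3)  # simulates an inference call that misses the deadline
    return 9.99


agents = {
    "features": lambda user_id: {"user_id": user_id, "device": "mobile"},
    "creative": lambda features: "variant_a",
    "bid": slow_bid_agent,
    "frequency": lambda features: True,
}
response = handle_bid_request({"id": "req-1", "user_id": "user_1"}, agents)
```

Note that a timed-out call keeps running in its worker thread; the loop simply stops waiting for it, so a real service would also want to bound the number of in-flight calls.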

Step 7: Monitor, Evaluate, and Iterate

Set up dashboards for each agent's performance (e.g., bid win rate, creative CTR, budget utilization rate). Use A/B testing at the system level: compare the multi-agent system against a single monolithic model. Track business metrics like overall revenue, cost per acquisition, and user ad fatigue. Implement automated rollback in the CI/CD pipeline if key metrics degrade by more than 5% in a canary deployment. Continuously feed new data into the training pipeline—agents should be retrained daily or weekly to adapt to seasonality and market changes.
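
The 5% rollback rule could be encoded as a small gate in the deployment pipeline, as in the sketch below. The metric names and the convention for which metrics are "lower is better" are assumptions for illustration.

```python
def should_rollback(baseline, canary, max_drop=0.05, lower_is_better=("cpa",)):
    """Return True if any shared metric degrades by more than `max_drop` (relative)."""
    for name, base in baseline.items():
        if name not in canary or base == 0:
            continue  # nothing to compare against
        change = (canary[name] - base) / base
        # For cost-like metrics an increase is the degradation; otherwise a decrease is.
        degradation = change if name in lower_is_better else -change
        if degradation > max_drop:
            return True
    return False


baseline = {"revenue": 100.0, "ctr": 0.020, "cpa": 10.0}
healthy = {"revenue": 98.0, "ctr": 0.020, "cpa": 10.2}
degraded = {"revenue": 94.0, "ctr": 0.020, "cpa": 10.0}

rollback_healthy = should_rollback(baseline, healthy)
rollback_degraded = should_rollback(baseline, degraded)
```

In practice the comparison should also account for statistical noise, for example by requiring a minimum sample size before the gate is evaluated.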

Tips & Best Practices

- Start simple: Begin with two agents (e.g., budget + bid) and gradually add more. Over-engineering early coordination can obscure learning signals.

- Use simulation for rapid prototyping: Build a simplified ad marketplace (with synthetic users and competitors) to test agent interactions before touching real data. This speeds up debugging and helps identify emergent behaviors.

- Align incentives carefully: If agents' reward functions are too narrow, they may game the system (e.g., the Creative Agent might show the same ad repeatedly to boost its own CTR while hurting user experience). Include penalties for undesirable side effects in the reward design.

- Watch for computation costs: Running multiple RL agents in real time can be expensive. Consider compressing models (e.g., quantization) or using off-policy evaluation on logged data to reduce live experimentation.

- Document agent decisions: In a multi-agent system, debugging is harder. Log every decision along with the agent's state and reasoning. Use tools like MLflow or custom decision audit trails to trace back a bad outcome to a specific agent's misstep.

- Phase out rule-based fallbacks gradually: As agents prove their reliability, reduce the rule-based overrides. But always keep a simple fallback for critical failures (e.g., if all agents crash, use a hardcoded safe bid).
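
To make the incentive-alignment tip concrete, here is a minimal sketch of a Creative Agent reward that subtracts a penalty for over-exposure. The penalty size, the `free_views` threshold, and the linear form are assumptions; the right shape depends on how ad fatigue shows up in your own data.

```python
def creative_reward(clicked, repeat_views, fatigue_penalty=0.1, free_views=3):
    """Click reward minus a linear penalty for each view beyond `free_views`."""
    base = 1.0 if clicked else 0.0
    excess_views = max(0, repeat_views - free_views)
    return base - fatigue_penalty * excess_views


fresh = creative_reward(clicked=True, repeat_views=2)      # no penalty yet
fatigued = creative_reward(clicked=True, repeat_views=5)   # click, but two excess views
ignored = creative_reward(clicked=False, repeat_views=10)  # no click, seven excess views
```

With a reward like this, repeating the same ad stops paying off once the penalty outweighs the expected click value.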

By following these steps, you can build a multi-agent advertising system that, like Spotify's, is smarter, more adaptable, and structurally sound—not just another AI feature bolted onto legacy code.